Key takeaways:

- Definition: Terms like GAIO, AIO, GEO, and LLMO refer to optimizing websites for LLMs and AI search engines to improve discoverability. LLMO aims to help companies position their brand, services, and products prominently in the outputs of leading generative engines such as ChatGPT, Google Gemini, and AI Overviews (formerly Google SGE).

- Why RAG matters: Retrieval-Augmented Generation (RAG) is a framework that improves the quality of LLM-generated answers by grounding the model in external knowledge sources. This supplements the model’s internal representation of information and reduces the need for constant retraining.

- Optimize for external sources: It’s more effective to improve how easily your content can be found in external knowledge sources that LLMs reference than to try to directly influence a model’s training data.

- Adapting SEO strategies: For effective on-page LLMO, content must be relevant and formatted in a way that stands out as a reliable answer in AI-enhanced search and LLM outputs. For off-page LLMO, presence on databases and aggregator sites plus digital PR is essential.

Generative experiences are coming whether your digital brand is ready or not:

- Google SGE (AI Overviews) and Google AI Mode were rolled out in the US and Europe

- More and more chatbots besides ChatGPT, such as Google Gemini, Microsoft Copilot, Grok, and Anthropic’s Claude, are emerging

So it’s high time you actively start optimizing for LLMs and chatbots. In this guide, you’ll learn:

- why optimization for language models is often misunderstood

- why SEO isn’t dying. It’s evolving, and has actually become even more important

- why Retrieval-Augmented Generation is the future

- and which concrete steps you need to take to drive more traffic from chatbots

Ready?


What is Large Language Model Optimization (LLMO)?

A common definition you’ll find online

LLMO stands for Large Language Model Optimization. The term describes a set of practices designed to influence the answers produced by chatbots like ChatGPT, Google Gemini, and Claude, as well as LLM-based generative experiences like Google SGE and Perplexity AI.

One important thing to know about that definition:

There isn’t a single, universally accepted term for this practice yet. The most common labels right now include:

- Large Language Model Optimization (LLMO),

- Generative Artificial Intelligence Optimization (GAIO)

- Artificial Intelligence Optimization (AIO)

- Answer Engine Optimization (AEO)

- and Generative Engine Optimization (GEO).

Each of these terms implies slightly different approaches and scope.

Our definition of Large Language Model Optimization:

LLMO aims to help companies position their brand, services, and products prominently in the outputs of leading generative engines—such as ChatGPT, Google Gemini, and Google AI Overviews.

Why is the definition tricky?

A large language model’s training data can be influenced, but you’d need to do it at massive scale to see any measurable effect. For most companies, that’s neither realistic nor economically attractive. That’s why I’m intentionally leaving that angle out.

The real optimization lever, based on what we’ve seen so far, is Retrieval-Augmented Generation (RAG)…

Retrieval Augmented Generation (RAG)

Large language models sometimes hit the nail on the head and other times they output random-sounding information pulled from their training data. If they occasionally sound like they have no idea what they’re talking about, that’s because they genuinely don’t.

LLMs understand statistical relationships between words, not what those words mean.

What is Retrieval Augmented Generation (RAG)?

RAG is a framework for improving the quality of LLM-generated answers: the model is grounded in external knowledge sources to supplement what the LLM “knows” internally.

Implementing RAG in an LLM-based Q&A system has two main benefits:

- The model gains access to current, reliable facts.

- Users can see the sources behind the model’s answer, making it possible to verify accuracy and therefore trust the output more.

RAG also reduces the need to constantly retrain the model and update its parameters whenever information changes.

RAG explained in plain English

Showing up in the output of a “static” LLM might be valuable for brand visibility, but it won’t drive traffic to your website.

Platforms like ChatGPT with internet access improve LLM output by pulling current, relevant content from search indexes (an external knowledge source). That retrieval process is what we call RAG.

It’s the combination of the LLM’s language capabilities with a curated set of highly relevant web sources that enables the chatbot to produce answers that are more up-to-date and factually accurate.
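As a rough illustration of the retrieval step described above, here is a minimal Python sketch. It is a toy and not how ChatGPT or Bing actually work: the `DOCUMENTS` index, the example.com URLs and their placeholder text, the word-overlap scoring, and the function names are all assumptions made up for this example. The point is only the shape of the process: retrieve relevant sources first, then hand them to the language model together with the question.

```python
import re

# Hypothetical mini "search index". In a real system this would be a web
# search index; the URLs and texts are placeholders for illustration.
DOCUMENTS = {
    "https://example.com/apple-calories": "A medium apple has about 95 calories.",
    "https://example.com/banana-calories": "A medium banana has about 105 calories.",
    "https://example.com/seo-basics": "SEO improves how easily pages are found in search engines.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: dict, k: int = 2) -> list:
    """Return up to k (url, text) pairs, ranked by naive word overlap."""
    query_tokens = tokenize(query)
    scored = [
        (len(query_tokens & tokenize(text)), url, text)
        for url, text in documents.items()
    ]
    # Drop documents without any overlap, then sort best-first
    # (ties are broken alphabetically by URL to keep the order stable).
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(url, text) for _, url, text in scored[:k]]


def build_prompt(query: str, sources: list) -> str:
    """Combine the retrieved sources and the question into one prompt."""
    lines = ["Answer the question using only the sources below and cite them."]
    for number, (url, text) in enumerate(sources, start=1):
        lines.append(f"[{number}] {url}: {text}")
    lines.append(f"Question: {query}")
    return "\n".join(lines)


question = "How many calories does an apple have?"
sources = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, sources)
print(prompt)  # this prompt would then be sent to the language model
```

Only content that makes it through the `retrieve` step can appear in the answer or be cited as a source, which is why discoverability in the underlying index matters so much.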

What you should take away

Because of how LLMs work, LLM-based chatbots will always need some form of search or retrieval to deliver current, accurate answers. That’s why ChatGPT uses Bing. SEO isn’t becoming less relevant. It’s gaining a whole new arena.

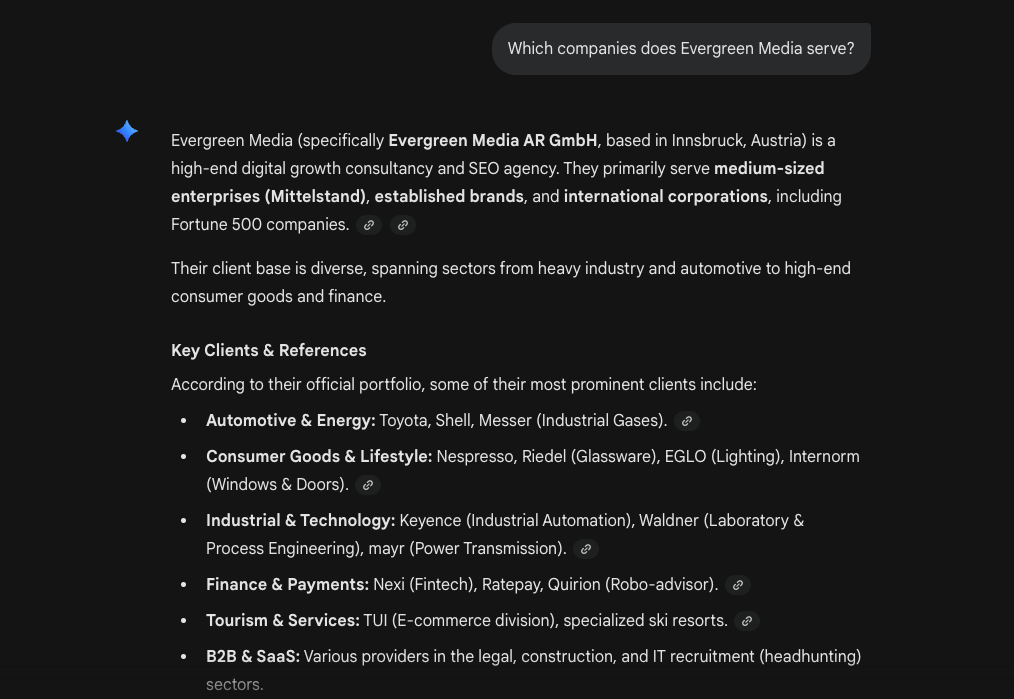

An Example of RAG

The following screenshots show how Retrieval Augmented Generation can look in practice:

This information isn’t part of GPT-4’s training data. Instead, ChatGPT retrieved it via Bing and then used the language model to generate the final wording.

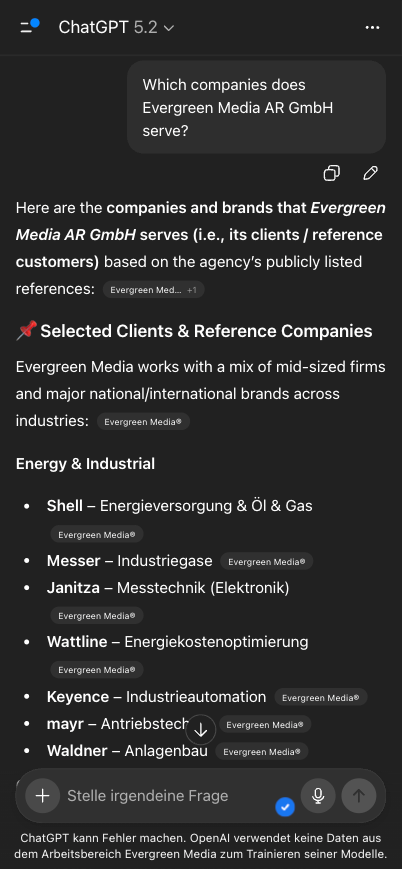

And here’s another example from Google Gemini:

I was able to influence this answer by adding a few structured pieces of information in the footer (not structured data).

Can you optimize for RAG-based large language models?

Optimizing for RAG-enabled LLMs is similar to optimizing for featured snippets, just with more variables and less predictability. Success depends on matching (or outperforming) the format and relevance of already high-performing content.

Principles for optimizing for large language models

1. Influencing LLM training data is theoretically possible but only at massive scale.

For most companies, that’s not realistic. And even if you could do it, the outcomes aren’t controllable.

2. LLMs aren’t constantly retrained. They use RAG to stay current.

So the lever for businesses isn’t manipulating training data, but improving discoverability in the external knowledge sources these systems retrieve from.

3. Optimization is about answers, not URLs.

In SEO, we split pages by search intent and keywords. In GAIO, everything revolves around answers.

Topic hubs may become more important again. After all, in chatbots and AI-enhanced search, the smallest unit isn’t a URL. It’s an answer.

4. We optimize discoverability in the external sources that power RAG.

In practice, that means:

- For ChatGPT, we optimize for Bing Search.

- For Gemini and AI Overviews, we optimize for Google Search.

If you’re highly discoverable in search engines, that visibility tends to carry over into chatbots.

5. Chatbot queries are more complex and nuanced, so keyword research and answer coverage must evolve.

Classic keywords will mostly become pillars that you build question-and-answer coverage around.

You can find the right questions via:

- “People Also Ask” (PAA)

- follow-up questions from conversations with Google, Bing, ChatGPT, and Perplexity

- customer interviews

- sales conversations
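One way to keep track of this kind of question-and-answer coverage is a simple mapping from each keyword pillar to the questions collected from the sources above. The following Python sketch is only an illustration: the `Pillar` class, the example questions, and the URL paths are hypothetical and not part of any tool mentioned in this guide.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Pillar:
    """A classic keyword with the questions we want to answer around it."""
    keyword: str
    # Maps each question to the page section that answers it,
    # or None if no answer has been published yet.
    questions: Dict[str, Optional[str]] = field(default_factory=dict)

    def gaps(self) -> List[str]:
        """Return the questions that still have no published answer."""
        return [q for q, answered_at in self.questions.items() if answered_at is None]


# Hypothetical example data, e.g. gathered from PAA and sales conversations.
pillar = Pillar(
    keyword="llmo",
    questions={
        "What is LLMO?": "/llmo-guide#definition",
        "How does RAG affect SEO?": "/llmo-guide#rag",
        "How do I check whether a site blocks AI bots?": None,
    },
)

print(pillar.gaps())
```

The unanswered questions returned by `gaps()` become the backlog for new answer sections under that pillar.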


OffPage-LLMO

Off-page LLMO is about your presence outside your own website, specifically on:

- Database websites: e.g., Crunchbase, Sortlist, or FirmenABC

- Knowledge aggregators: large, moderated UGC websites like Reddit, LinkedIn, YouTube, and Wikipedia

- Large publishers: like The Verge, Financial Times, or Forbes

Our goals:

- We gain visibility because mentions on these platforms can be pulled directly via RAG. Example: we show up in a list of the best GAIO agencies in Germany.

- We make it into LLM training data through long-term, stable presence on these platforms.

What do you need to watch out for?

The external site/platform must not be blocking the relevant bot if you want to use it as part of your optimization strategy.
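A quick way to check this is to read the platform’s robots.txt. The sketch below uses Python’s standard `urllib.robotparser` module. The robots.txt content and the example.com URL are hypothetical, and which user agent a given chatbot actually crawls with should be verified in the vendor’s documentation; `GPTBot` and `Bingbot` are used here only as examples.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt of an external platform. In practice you would
# download it from https://<platform-domain>/robots.txt.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_bot_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


page = "https://example.com/best-seo-agencies"
for bot in ("GPTBot", "Bingbot"):
    status = "allowed" if is_bot_allowed(ROBOTS_TXT, bot, page) else "blocked"
    print(f"{bot}: {status}")
```

With these example rules, a listing on the platform could still be retrieved via Bing, but it would not be crawled by GPTBot.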

What do you need to consider?

Here you’ll find a list of large websites and which bots they’ve blocked. And here’s another list with domains Google trusts.

| Platform type | What is this? | Examples | What to do? |
| --- | --- | --- | --- |
| Database websites | Classic aggregators with some kind of listings, e.g., business directories and review platforms | sortlist.de | Create a complete profile for your brand; rise as high as possible in the platform’s ranking, e.g., appear high up on the “Best SEO Agencies in Innsbruck” page |
| Knowledge aggregators | Platforms where you can publish content that’s moderated | youtube.com, wikipedia.org | Actively participate as a brand on the platforms; play each platform’s game well, e.g., build a popular YouTube channel |
| Large publishers | Newspapers, magazines, etc. | theverge.com, forbes.com | Publish sponsored content to buy your place; convince journalists to report on your brand with online PR campaigns |

That’s why I’m also pushing the topic of digital PR so much. Link building is nice, but low-quality articles from private blog networks (PBNs) won’t help you here.

If you want to generate visibility with AI-enhanced search engines and large language models, you must be mentioned again and again in large publishers. I explicitly say mentioned because it’s not about backlinks.

It’s about prominence for direct queries (ChatGPT via browsing, i.e., RAG) and enough mentions to possibly stand out in the training data.

OnPage-LLMO

Some people will tell you to optimize for individual answers. I doubt that’s the smartest long-term path.

Take AI Overviews as an example.

iPullRank writes about their experiments:

The more saturated the topic or vague the query, the more volatility we’ll see in the results day to day.

Garrett Sussman, iPullRank, 6 February 2024

That doesn’t sound like a scalable strategy or a good use of time. Instead, we want to optimize all of our content around LLMO best practices to increase the probability of showing up in chatbots.

Pointless busywork is still pointless.

Your Framework

- It always comes down to relevance and format.

- We build question-and-answer coverage around our classic keyword pillars.

- Instead of giving users a 4,000-word article about the calories in apples, we give them the answer fast and clearly.

Language

- Use simple, clear, information-dense language (think: what works in featured snippets).

- Explain technical terms in a structured format, short and sharp.

Structure

- Aim for “ranch-style” content, not skyscraper content.

- Start long-form content with a short summary of the key takeaways.

- Start every chapter with a short, direct answer.

- Format content so it can be used as an answer (this depends on the model):

  - length

  - formatting

  - information density

- Use proven response patterns like pros/cons, comparisons, and frameworks.

- Structure your website based on how an LLM structures your topic.

- Use structured data.
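For the structured data point, one common option is schema.org markup embedded as JSON-LD. The following Python sketch generates `FAQPage` markup from question-and-answer pairs. The `faq_jsonld` helper and the sample question are assumptions for illustration, and this guide does not confirm how strongly any particular engine weighs this markup.

```python
import json


def faq_jsonld(qa_pairs: list) -> dict:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical sample content.
markup = faq_jsonld([
    ("What is LLMO?", "LLMO stands for Large Language Model Optimization."),
])

# JSON-LD is embedded in the page inside a script tag of this type.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Generating the markup from the same question-and-answer pairs that appear on the page helps keep the visible content and the structured data in sync.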

Content

- Look at what answers are currently being surfaced and learn from them in terms of format and relevance.

- Provide unique information or data that isn’t already in training data or on competing pages (information gain).

- Add quotes (e.g., from recognized experts or institutions).

- Include statistics and quantitative data.

- Cite authoritative sources.

Preparation

- Collect user-generated content (UGC) and synthesize the insights in a citation-ready format, i.e., as a helpful answer.
